RDEL #143: How does deepening AI fluency change what it means to be a software developer?

Research reveals a four-stage journey from skeptic to strategist, and a new "creative director of code" identity.

Welcome back to Research-Driven Engineering Leadership. Each week, we pose an interesting topic in engineering leadership and apply the latest research in the field to drive to an answer.

With the surge in AI adoption across engineering teams, developers consistently report something unsettling: they’re using AI more while spending less time on the work they find meaningful. That gap between what AI accelerates and what makes the job feel worth doing is starting to reshape what developers consider their craft. This week we ask: How does deepening AI fluency change what it means to be a software developer?

The context

A developer’s week looks less like writing code and more like everything around it. Recent time-allocation research shows that engineers spend only about 14% of their week actually coding. The rest is split across meetings, security and compliance, debugging, system design, and a long tail of coordination work. Most developers want a different distribution: more time on problem solving, learning, and visible progress, and less on reactive work. As that gap widens, satisfaction and productivity decline.

AI was supposed to close that gap. The promise was that automating toil would free up time for meaningful work. In practice, adoption has surged while the gap has stayed open, or even grown. That suggests the framing of “what tasks could be automated?” may have been the wrong question from the start. The more useful question is what makes work meaningful to developers in the first place, and whether the tools we’re handing them actually touch those parts of the job.

The research

Researchers from Oregon State, GitHub, Microsoft, and UC Irvine combined two large-scale mixed-methods surveys (of 484 and 860 developers) with in-depth interviews of 22 AI-fluent practitioners to map how developer work, identity, and AI use intersect. They examined how developers appraise their tasks, where they want AI involved, and how identity shifts as AI fluency deepens.

Key findings include:

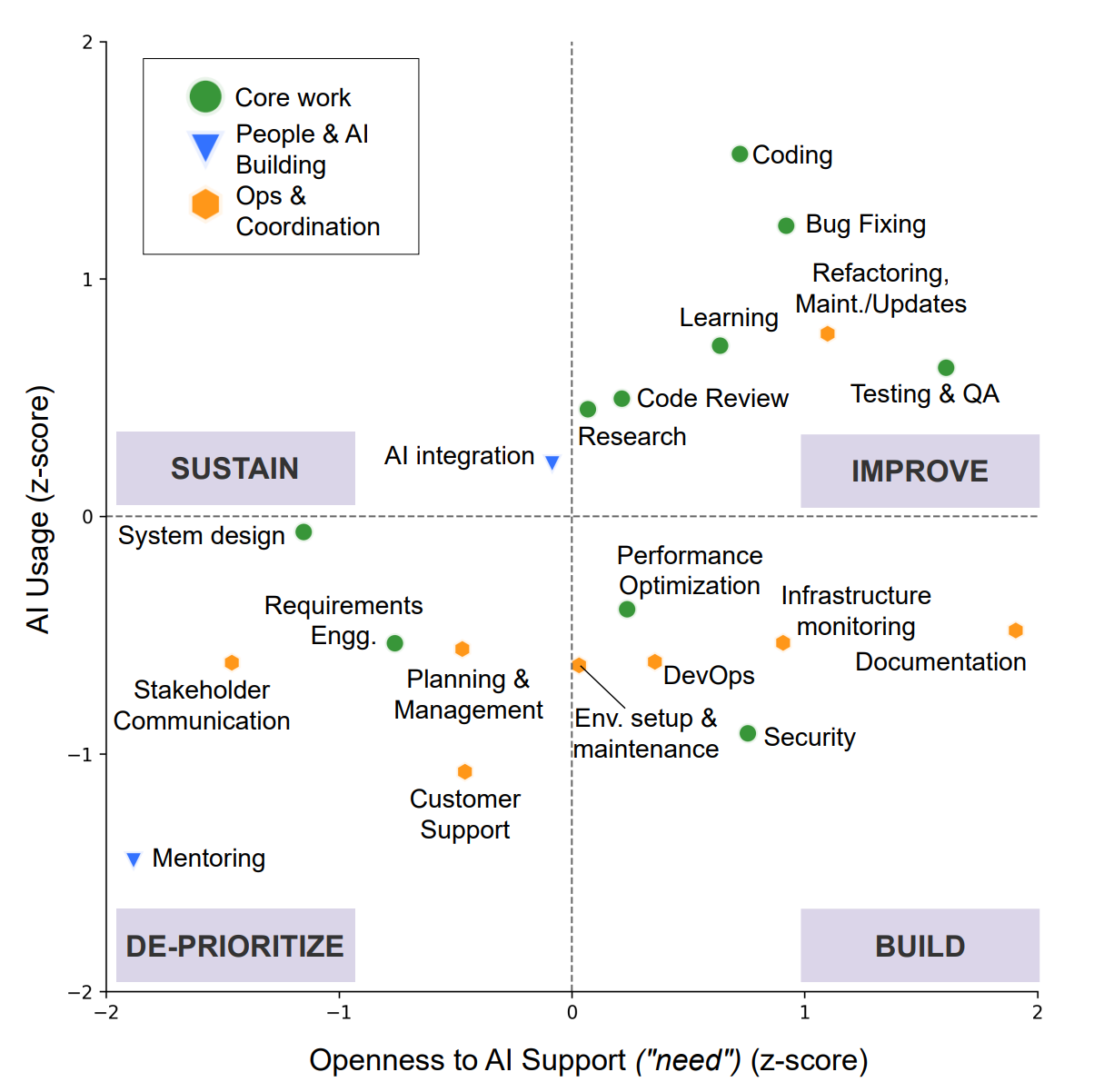

Developers appraise their work along four dimensions: value, identity, accountability, and demands. These appraisals cluster work into three buckets: Core Work (coding, debugging, system design), Ops & Coordination (DevOps, documentation, stakeholder comms), and People & AI Building (mentoring, AI integration). Developers' appetite for AI differs sharply across the three clusters (covered in detail in a previous RDEL).

Where developers want AI is shaped by identity, not by tedium. Mentoring, AI integration, and relational coordination work landed in the “deprioritize” zone of the AI opportunity space, not because they’re easy, but because developers deliberately kept them close to protect their growth and authenticity. The pattern: developers weren’t trying to do less work; they were protecting the parts that made it worth doing.

For Core Work, the barrier to deeper AI adoption is trust, not reluctance. Developers were genuinely open to substantial AI help with test generation, cross-artifact debugging, and architecture-aware refactoring, but current tools fell short on "reliability, transparency, and contextual alignment." That's a tractable engineering problem, not a cultural one.

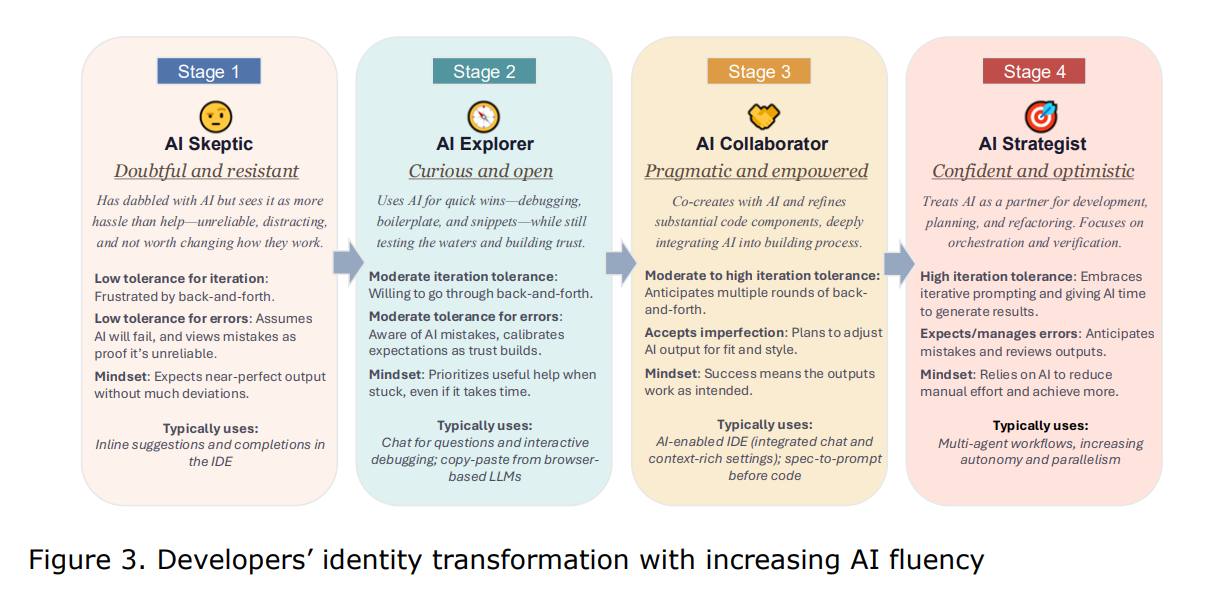

AI fluency develops in four stages: Skeptic, Explorer, Collaborator, Strategist. Skeptics treat each AI failure as confirmation of their doubts. Explorers accumulate small wins that build trust. Collaborators bring AI in from the start as a thought partner ("we go back and forth"). Strategists orchestrate multi-agent workflows and feel energized, not threatened, by AI writing most of the code.

A new identity is emerging: “creative director of code.” Strategists practice their craft at a different level of abstraction, organized around three pillars: Vision (front-loading shared context with agents), Direction (decomposing work into specs and orchestrating execution), and Verification (continuous, rigorous review). The highest-value skill becomes knowing what to build, why it matters, and whether what was built is right.

Even optimistic adopters worry about what’s being lost. The “learning paradox” (am I getting better at engineering, or just better at prompting?) and concerns about how juniors learn when AI writes most of the code came up repeatedly. Verification is the mechanism for retaining accountability, but only if velocity pressure doesn’t quietly erode it.

The application

The useful question isn't "what can AI automate?" but whether our tools have earned the trust to touch what developers find meaningful. Developers aren't blindly resisting AI; they're making rational bets about what to protect. Leaders who design integration around those bets will see deeper adoption than those chasing pure code-volume metrics.

Here are a few ways engineering leaders can act on these findings:

Map your team’s AI opportunity space before scaling adoption. Don’t just ask which tasks are tedious. Ask which tasks carry identity weight and which carry toil weight. Push AI hardest into Ops & Coordination toil (environment provisioning, telemetry, routine maintenance) where developers already want help, and let engineers opt into how deeply AI touches their core work. Assess your organization’s AI maturity to understand where gaps likely fall.

Invest in trust-building practices for Core Work. Reliability, transparency, and contextual alignment are the blockers, not appetite. Set norms around layered verification, encourage developers to triage AI output the way they would lint errors, and budget time for the iteration loops that move teams from Explorer to Collaborator.

Protect the on-ramp for junior engineers. If AI handles the boilerplate that juniors used to learn from, design new learning paths: deliberate pairing with senior engineers, unaided practice on debugging and refactoring, and structured exposure to system design tradeoffs. Don’t let velocity pressure erode the conditions that produce the next generation of strategists.

—

The transformation is already underway. What we measure and reward over the next two years will shape which version of the developer role we end up with.

Happy Research Tuesday!

Lizzie